The balance of power in global AI has shifted. Chinese AI firm DeepSeek has released a preview of its latest model, DeepSeek-V4 AI, which is designed to challenge established industry leaders like ChatGPT and Gemini. The release narrows the gap between closed-source giants and accessible, open-weight technology for developers worldwide.

Calibrated Performance and Architectural Precision

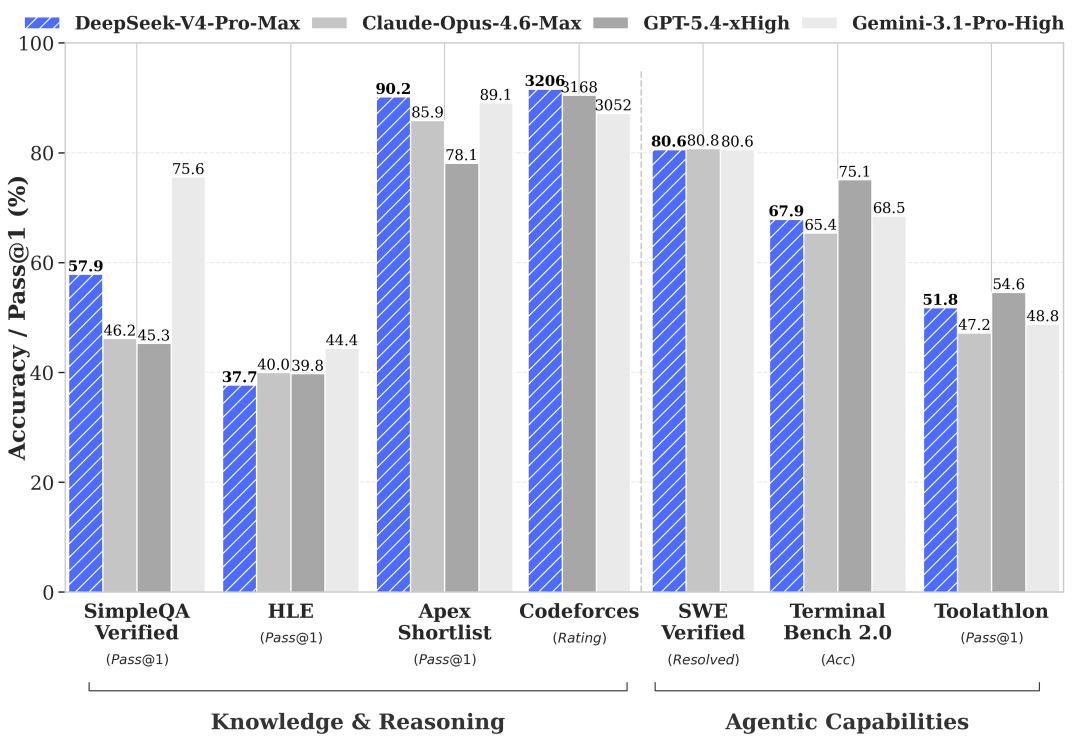

DeepSeek-V4 arrives in two distinct configurations: Expert and Instant. The V4-Pro model, which powers the Expert mode, demonstrates exceptional reasoning capabilities in mathematics, STEM, and coding environments. Furthermore, benchmarking data indicates that V4-Pro trails only Google’s Gemini-3.1-Pro in world knowledge assessments. This precision ensures that users receive high-tier output without the traditional reliance on proprietary American systems.

Strategic Access to DeepSeek-V4 AI

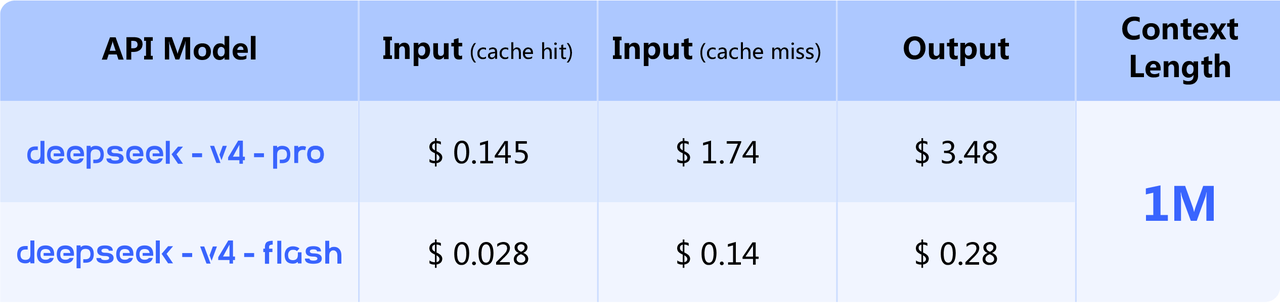

The “Instant” mode uses the V4-Flash model, which offers reasoning performance remarkably close to the Pro version. Both models support a 1 million token context window, allowing them to process very large inputs in a single pass. The Expert model has 1.6 trillion parameters, while the Instant model has 284 billion.

Decentralized Computing: The Open-Weight Advantage

DeepSeek-V4 AI is available as an open-weight model via Hugging Face. This strategic decision allows the global community to download and execute the model on private hardware. While full performance requires substantial resources, the community will likely develop distilled versions for consumer-level GPUs. Consequently, this approach decentralizes AI power, moving it from corporate servers into the hands of individual innovators.
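To put “substantial resources” in perspective, the sketch below estimates how much memory the weights alone would occupy at a few common numeric precisions. The parameter counts come from this article; the bytes-per-parameter figures are generic assumptions, since the precision of the released weights is not stated here, and the estimate ignores the extra memory needed for the context cache and activations.

```python
# Rough weight-storage estimate. Parameter counts are from the article;
# the precisions below are illustrative assumptions, not release details.
MODELS = {
    "V4-Pro (Expert)": 1.6e12,
    "V4-Flash (Instant)": 284e9,
}

# Bytes needed to store one parameter at each assumed precision.
BYTES_PER_PARAM = {
    "16-bit": 2.0,
    "8-bit": 1.0,
    "4-bit": 0.5,
}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Return the memory needed for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    for model_name, num_params in MODELS.items():
        for precision, nbytes in BYTES_PER_PARAM.items():
            gb = weight_memory_gb(num_params, nbytes)
            print(f"{model_name:20s} {precision:7s} ~{gb:,.0f} GB")
```

Even under the most aggressive assumption here, both models are far beyond a single consumer GPU, which is why smaller distilled versions matter for home users.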

The Translation

To understand the logic behind the numbers, we must look at “active parameters.” While the Expert model has 1.6 trillion total parameters, only 49 billion are “active” for any given token. This Mixture-of-Experts (MoE) architecture reduces the compute spent on each token rather than the memory needed to store the weights. It allows the model to remain highly capable while significantly reducing the computational load compared to traditional “dense” models, which use every parameter for every token. In essence, the AI only uses the “brain cells” it needs for a specific question.
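The arithmetic behind that claim can be sketched in a few lines. The two parameter counts are taken from this article; the “2 FLOPs per active parameter per token” figure is a common rule of thumb for a forward pass and is an assumption here, not a published DeepSeek number.

```python
# Figures quoted in the article for the Expert (V4-Pro) model.
TOTAL_PARAMS = 1.6e12   # all parameters across every expert
ACTIVE_PARAMS = 49e9    # parameters actually used for one token

# Assumed rule of thumb: about 2 floating-point operations
# per active parameter for each generated token.
FLOPS_PER_PARAM = 2


def active_fraction(total: float, active: float) -> float:
    """Share of the model's parameters that take part in one token."""
    return active / total


def flops_per_token(params_used: float) -> float:
    """Approximate forward-pass compute for one token."""
    return FLOPS_PER_PARAM * params_used


fraction = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
dense_cost = flops_per_token(TOTAL_PARAMS)   # hypothetical dense model of equal size
moe_cost = flops_per_token(ACTIVE_PARAMS)    # MoE model using only active experts
savings = dense_cost / moe_cost

if __name__ == "__main__":
    print(f"Active share of parameters: {fraction:.2%}")
    print(f"Compute reduction vs. a dense model of equal size: ~{savings:.1f}x")
```

Under these assumptions, roughly 3% of the parameters do the work for each token, so the per-token compute is about one thirty-third of what an equally large dense model would need.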

The Socio-Economic Impact

For the Pakistani citizen, this development is a catalyst for digital sovereignty. Students and independent developers in Pakistan often face high costs when accessing premium closed-source APIs in foreign currency. Because DeepSeek-V4 AI is open-weight, local institutions can host these models on their own servers. This reduces costs, ensures data privacy, and provides world-class STEM tools to households that previously couldn’t afford a ChatGPT Plus subscription.

The Forward Path

This development represents a momentum shift in the global AI race. By providing near-top-tier performance in an open-weight format, DeepSeek is pushing the industry toward transparency and accessibility. For Pakistan, the strategic path forward involves investing in local GPU infrastructure to harness these models. We are moving from a phase of “AI Consumption” to a phase of “Local AI Implementation.”