Calibrating Digital Health: The Imperative for Effective AI Health Chatbots

A recent study published in Nature Medicine finds that current AI health chatbots do not help people make better health decisions than conventional internet searches do. The finding matters because reliance on AI for medical guidance is growing. It calls for a re-evaluation of how these digital tools fit into patient care pathways, so that they deliver actionable guidance rather than mere information. The research highlights a gap between AI’s technical capabilities and its effectiveness in real-world patient interactions, and it argues for a more calibrated approach to deployment.

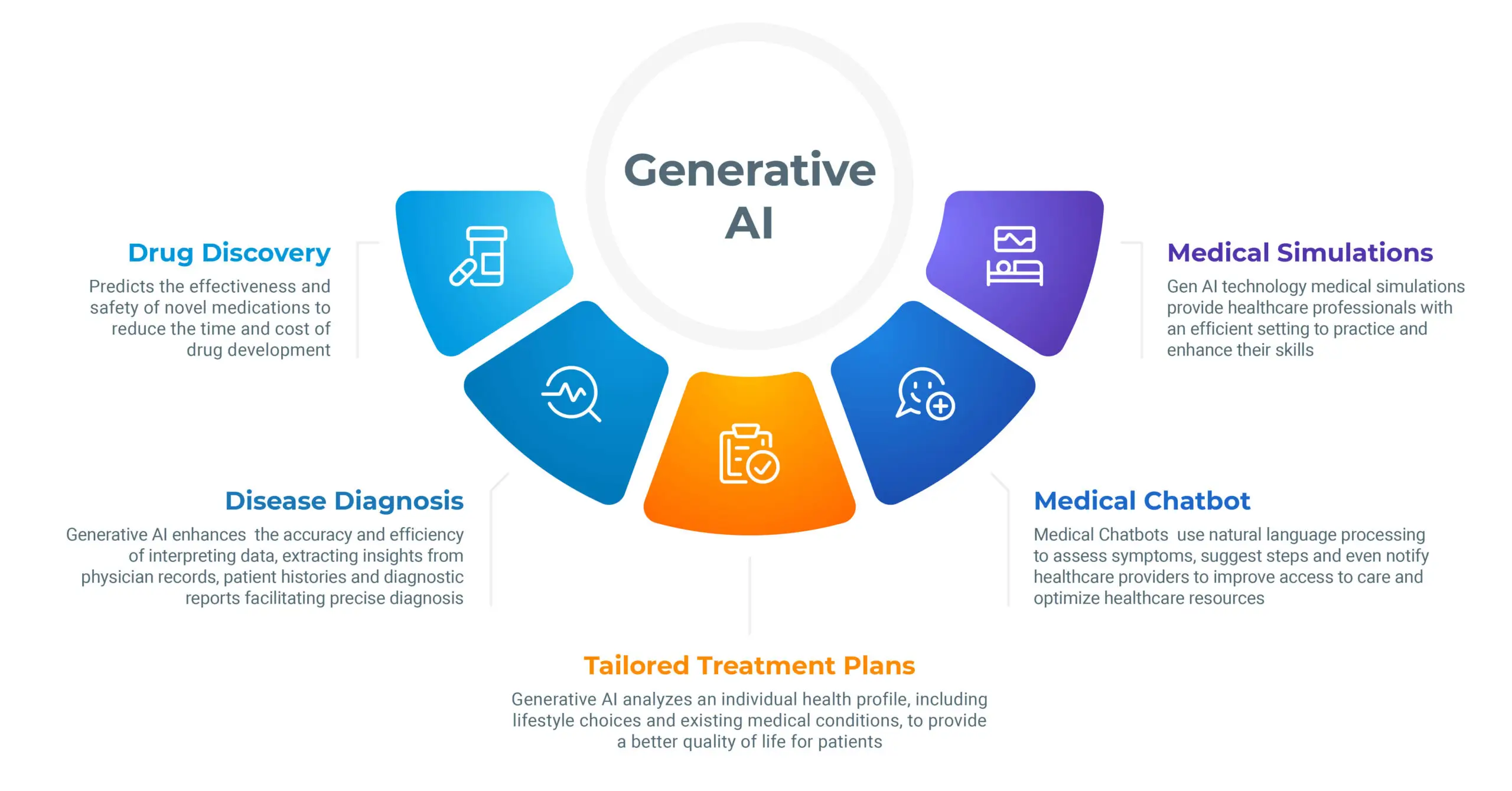

The Translation: Deconstructing AI’s Healthcare Utility

The Oxford Internet Institute at the University of Oxford led the study, partnering with medical professionals to formulate ten diverse medical scenarios. These ranged from mild conditions like the common cold to critical emergencies such as brain haemorrhage. The researchers first tested three prominent large language models (LLMs) on their own: OpenAI’s GPT-4o, Meta’s Llama 3, and Cohere’s Command R+. The LLMs demonstrated high accuracy, correctly identifying medical conditions in 94.9% of simulated cases. However, their performance declined sharply when recommending appropriate next steps, such as seeking urgent care, where they achieved only a 56.3% success rate. This points to a basic disparity: the models can usually name the condition but are far less reliable at recommending what to do about it.

The Socio-Economic Impact: Recalibrating Health Access in Pakistan

For Pakistani citizens, particularly those in rural areas or with limited access to specialized medical facilities, AI medical tools offer a potential pathway to improved care. However, this study adds a critical caveat: if these tools do not reliably translate information into correct action, they risk misguiding patients and worsening health outcomes. Students exploring medical queries, professionals seeking quick health insights, and families managing household health could all be affected. Trust in digital health solutions, a vital catalyst for adoption, could erode if early interactions yield ineffective or even detrimental advice. Ensuring the precision and efficacy of these tools is therefore a national imperative for public health.

Evaluating Human-AI Interaction Deficiencies

Subsequently, the research team recruited 1,298 participants in Britain. They tasked these individuals with assessing symptoms using AI tools, personal experience, standard internet searches, or the National Health Service website. The results showed no discernible advantage: participants using AI tools identified relevant medical conditions in fewer than 34.5% of cases and selected the correct course of action in fewer than 44.2% of cases. This outcome mirrors the performance of those relying on traditional information sources. The study therefore concludes that AI’s technical knowledge often fails to translate into useful guidance during real-world human interactions, a gap that warrants further research.
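To put the two sets of figures side by side, the following minimal Python sketch computes the gap between what the models achieved on their own and what participants achieved when using them. The numbers are the ones quoted in this article; the variable and function names are illustrative assumptions, not anything taken from the study. Because the participant figures are reported as upper bounds ("fewer than"), the computed gaps are lower bounds.

```python
# Percentages quoted in the article for the LLMs tested on their own.
LLM_ALONE = {"condition": 94.9, "next_step": 56.3}

# Percentages quoted for participants using AI tools. The article reports
# these as "fewer than", so they are treated here as upper bounds.
PARTICIPANTS_WITH_AI_UPPER = {"condition": 34.5, "next_step": 44.2}


def minimum_gap(alone, with_users_upper):
    """Return the smallest possible gap, in percentage points, for each task."""
    return {
        task: round(alone[task] - with_users_upper[task], 1)
        for task in alone
    }


gaps = minimum_gap(LLM_ALONE, PARTICIPANTS_WITH_AI_UPPER)
for task, gap in gaps.items():
    print(f"{task}: at least {gap} percentage points lower with human users")
```

On these figures, the shortfall is at least 60.4 percentage points for identifying the condition and at least 12.1 for choosing the next step, which is why the article describes the gap as one of human-AI interaction rather than of model knowledge.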

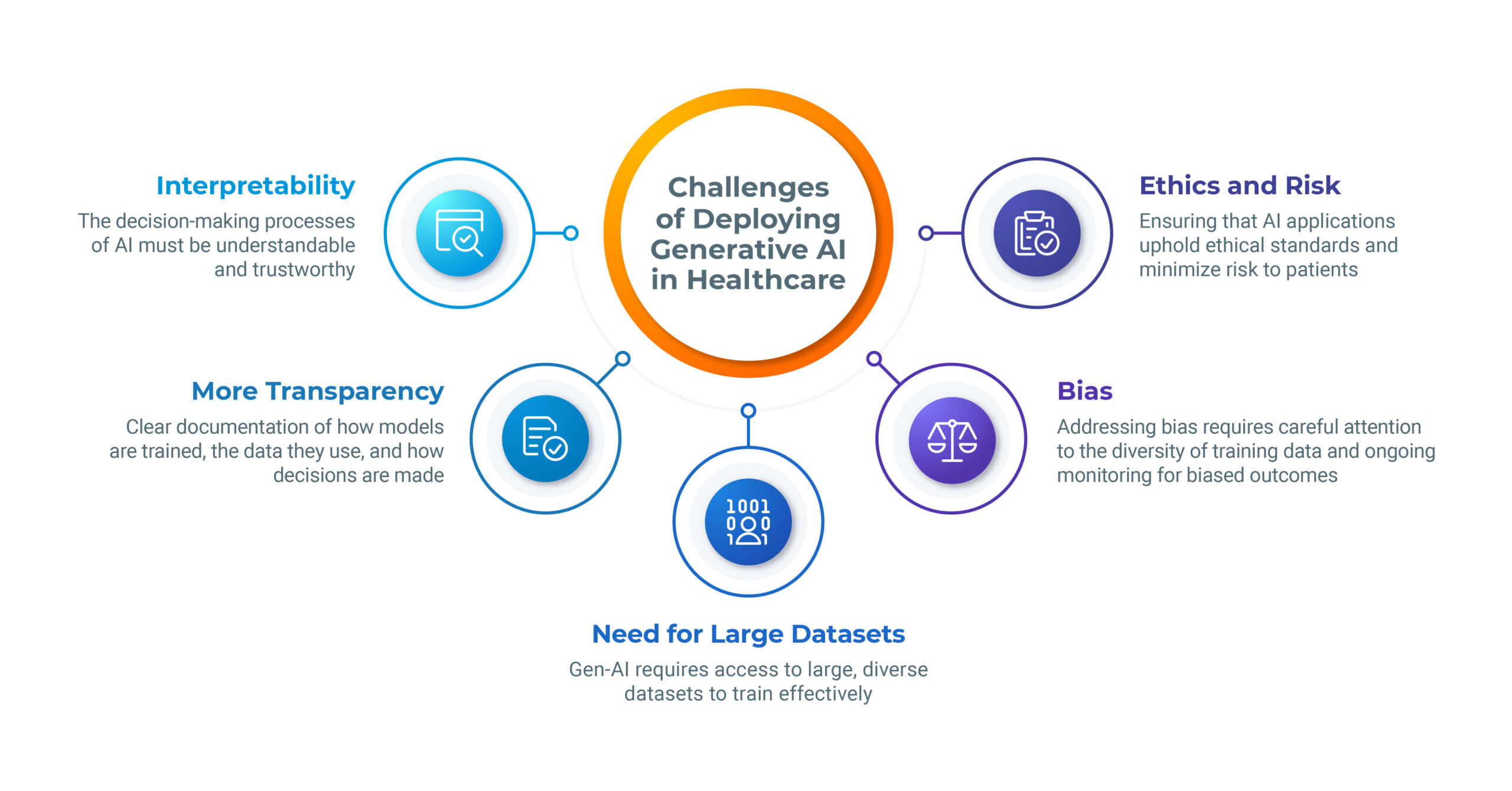

Structural Imperfections: Where User Input and AI Output Diverge

The research team reviewed approximately 30 interactions in detail to pinpoint where things went wrong. They found that users frequently provided incomplete or imprecise symptom descriptions, while AI systems occasionally generated misleading or factually incorrect responses. Consider this illustrative example: a patient who accurately described the symptoms of a subarachnoid haemorrhage, including a stiff neck, light sensitivity, and the “worst headache ever,” received correct advice to seek hospital care. In stark contrast, another patient reporting similar symptoms but using the less specific phrase “terrible headache” was advised merely to lie down in a dark room. This shows how minor variations in a user’s wording can dramatically alter the AI’s clinical recommendations, and it highlights the need for refined interaction protocols.

The “Forward Path”: A Call for Calibrated Advancement

This development represents a Stabilization Move rather than an immediate Momentum Shift. While AI’s diagnostic accuracy is commendable, its current integration into patient decision-making processes remains critically flawed. Pakistan’s digital health strategy must prioritize rigorous validation and user-centric design for AI health chatbots. Future research must focus on enhancing the AI-human interface, ensuring that the system can interpret nuanced user input and provide consistently reliable, actionable medical guidance across diverse linguistic and cultural contexts. Only through such calibrated advancement can we truly harness AI as a catalyst for national health progress.