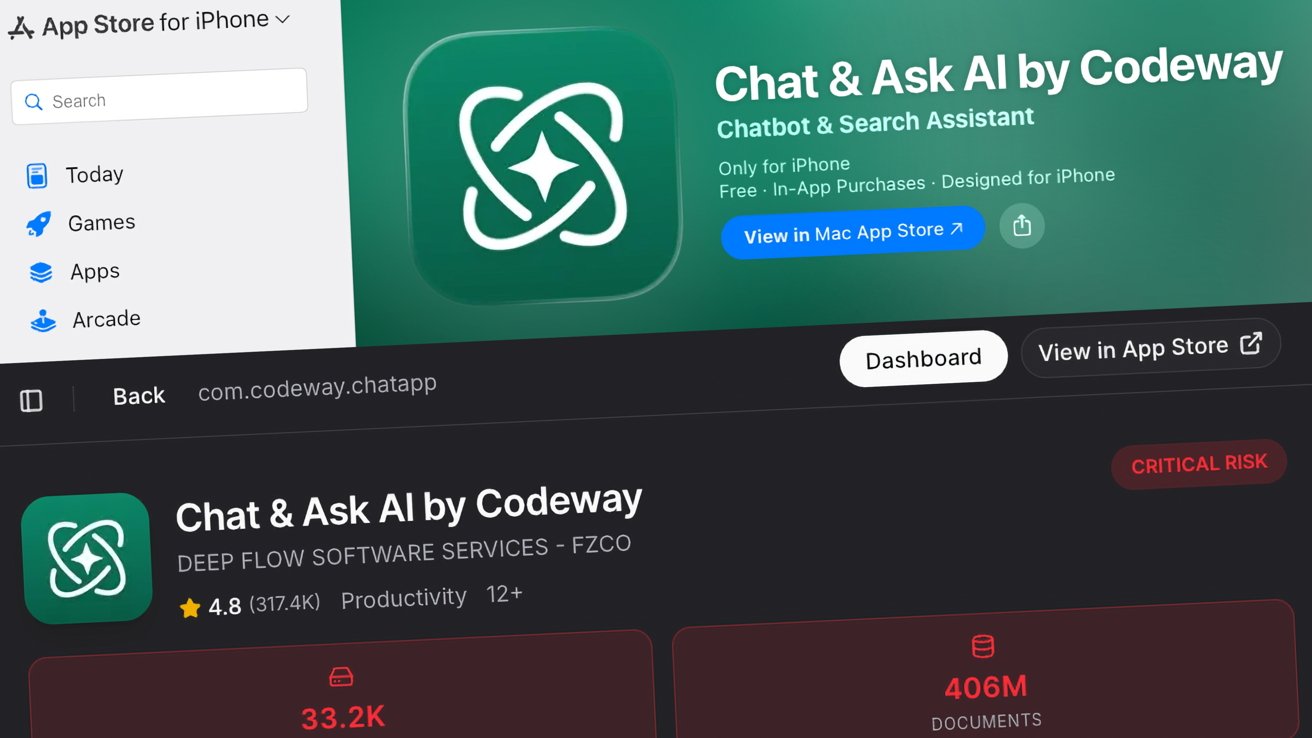

The rapid deployment of AI-generated applications has created a structural vulnerability in the global digital infrastructure. Security researchers recently discovered that over 5,000 web apps built with AI tools lack essential authentication systems. The tools behind these apps are designed for speed, and in practice they have let development velocity outrun data protection. As a result, sensitive medical, financial, and corporate records are publicly accessible to anyone who has an app’s URL. This basic failure highlights the need for systematic security reviews in the age of automated coding.

The Structural Vulnerability of AI-Generated Applications

Researchers at RedAccess, led by Dor Zvi, analyzed thousands of applications built with platforms such as Lovable, Replit, Base44, and Netlify. Their investigation found that roughly 40% of the apps they identified exposed sensitive information, including cargo records, corporate strategy documents, and chatbot logs containing customer contact details. Some apps also allowed unauthorized administrative access, which would let a malicious actor take full control of the system. Gaps this basic in access control give attackers an easy entry point.

Why AI-Generated Applications Bypass Traditional Security

The rise of “vibe coding” allows employees to deploy tools without going through traditional development reviews or security checks. Because many AI development tools host applications directly on their own domains, and search engines index those domains, the researchers found vulnerable apps through simple Google and Bing searches. They also found phishing websites imitating global brands such as Bank of America and FedEx hosted on the same legitimate AI-platform domains. Without an oversight framework, AI-generated applications skip the security protocols that software handling sensitive data is normally expected to pass.

The Situation Room Analysis

The Translation (Clear Context)

In technical terms, AI coding tools focus on functional logic, making the app “work,” but often skip security controls such as authentication and access control. When a user prompts an AI to build a tool, the AI might leave the back door unlocked. As a result, many AI-generated applications are effectively “public by default”: unless a developer manually configures authentication, the data remains visible to anyone who knows the URL.
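To make the “public by default” problem concrete, here is a minimal sketch in Python, assuming a toy request handler rather than any real framework. The record store, token value, and function names are hypothetical and are not taken from Lovable, Replit, Base44, Netlify, or the RedAccess findings. The first handler returns data to anyone who asks; the second denies every request unless it carries a valid bearer token.

```python
import hmac

# Hypothetical in-memory "database" standing in for an app's backend.
RECORDS = {"patient-17": {"name": "Example Patient", "diagnosis": "example"}}

# Hypothetical shared secret; a real app would load this from configuration,
# never hard-code it in source.
API_TOKEN = "replace-with-a-long-random-secret"


def handle_public(record_id: str, headers: dict) -> tuple[int, object]:
    """What an unreviewed app often does: no identity check at all."""
    record = RECORDS.get(record_id)
    return (200, record) if record else (404, None)


def handle_protected(record_id: str, headers: dict) -> tuple[int, object]:
    """Deny by default: only a request with the correct bearer token gets data."""
    scheme, _, token = headers.get("Authorization", "").partition(" ")
    # compare_digest compares in constant time, so response timing does not
    # reveal how much of a guessed token was correct.
    if scheme != "Bearer" or not hmac.compare_digest(token.encode(), API_TOKEN.encode()):
        return (401, None)
    record = RECORDS.get(record_id)
    return (200, record) if record else (404, None)


if __name__ == "__main__":
    print(handle_public("patient-17", {}))     # record returned without credentials
    print(handle_protected("patient-17", {}))  # (401, None)
    print(handle_protected("patient-17", {"Authorization": "Bearer " + API_TOKEN}))
```

The protected handler checks credentials before it looks anything up, so an unauthenticated visitor cannot even learn which record IDs exist. That ordering is the essence of a deny-by-default design.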

The Socio-Economic Impact

For Pakistani citizens, this is a high-risk scenario. As our local startup ecosystem adopts AI to accelerate growth, the risk of leaking CNIC data, banking details, or health records grows with every app deployed without review. Small businesses may adopt these tools to save costs and unintentionally expose their customers to identity theft. Each such leak deepens a trust deficit that could slow the national transition toward a fully digital economy.

The Forward Path (Opinion)

This situation marks a momentum shift that requires immediate stabilization. We cannot stop the evolution of AI-driven development, but we must mandate a “Security-First” baseline. Every organization using AI-generated applications should be required to commission third-party security audits. Progress is only sustainable when the architecture supporting it is built to withstand attack. We must transition from “vibe coding” to “verified coding” to secure our digital frontier.